Speaking the Language of the Mind: How We Benchmarked AI on Real Human Preferences

A 23M-parameter graph model outperforms GPT-4.1 mini at preference prediction on real user data, with 347x fewer parameters, zero inference cost, and 50 seconds to train.

Part 1: Early Benchmark Results

When you hear the word "apple," your mind doesn't retrieve a single data point. A cascade of associations fires: fruit, red, tree, Newton, iPhone, pie, health, your grandmother's orchard. This spreading activation, where one memory triggers related memories across different domains, is fundamental to how humans think.

But current AI memory systems treat information as isolated points floating in high-dimensional space. Ask about apples and you get the most similar vectors. No associations. No structure. No understanding of how memories relate to each other.

And memory doesn't exist in isolation. It's interleaved with emotion, hierarchical reasoning, and behavioral priors. When you hear "apple," your mind doesn't just recall. It produces a constellation of emotional responses, contextual primes, and action readiness.

Current paradigms are isolated, limited, and abstract. We wanted to build something different. Something that speaks the language of the mind.

This is Part 1 of our Yggdrasil series, covering early benchmark results from an initial sample of users. Part 2, with a larger user base, production environment testing, and improved results, is coming soon.

Current Landscape

State-of-the-art memory systems in AI, including vector databases (e.g., Pinecone, Chroma, FAISS), memory-augmented agents (e.g., MemGPT), and dedicated memory layers (e.g., Mem0, Zep), primarily rely on flat embedding spaces. These store user interactions or knowledge as high-dimensional vectors and retrieve the most similar ones via cosine similarity or k-NN search.
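Here is a minimal sketch of the flat retrieval pattern described above. It assumes memories are already stored as vectors. Every memory is scored against the query by cosine similarity, and the top k are returned. The toy three-dimensional vectors and memory names are hypothetical. Production stores such as FAISS or Chroma typically use approximate nearest-neighbor indexes rather than this brute-force loop.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query: list[float], memories: dict[str, list[float]], k: int = 2) -> list[str]:
    """Brute-force k-NN: rank every stored memory by cosine similarity to the query."""
    ranked = sorted(memories, key=lambda name: cosine(query, memories[name]), reverse=True)
    return ranked[:k]

# Hypothetical toy embeddings; real ones would come from a text encoder.
memories = {
    "apple pie recipe": [0.9, 0.1, 0.0],
    "orchard trip": [0.8, 0.3, 0.1],
    "iPhone review": [0.1, 0.9, 0.2],
}
print(top_k([1.0, 0.2, 0.0], memories))  # ['apple pie recipe', 'orchard trip']
```

Each memory is scored independently against the query. Nothing in the result encodes how "apple pie recipe" and "orchard trip" relate to each other.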

Benchmarks like LOCOMO and LongMemEval reward these systems for accurate regurgitation of past details over extended dialogues. Top performers in 2025-2026 achieve high scores (often 80-90% recall accuracy) by optimizing storage efficiency and retrieval speed.

Yet these benchmarks, and the systems they measure, fall short in translating to real user outcomes. High LOCOMO scores correlate poorly with engagement, trust, or subjective "feeling understood." They optimize for isolated fact retrieval, not the interconnected, associative nature of human thought. Emotions, behavioral priors, and cross-domain links are either ignored or bolted on superficially.

The result: AI that remembers what you said, without understanding how it connects to who you are.

There is a deeper problem here. Plugging memory into an LLM, even sophisticated memory, fundamentally produces an assistant that knows about you. It can recall your preferences, summarize your history, and tailor its responses accordingly. That has real value. But understanding you the way you understand yourself requires something more.

Onairos is building a digital cognitive twin: a system that models how you think, including the associations, hierarchies, and gaps that define how your mind actually organizes experience. A cognitive twin can predict what you'd choose next, explain why, and do it without querying an external model. A memory layer bolted onto an LLM captures the content of your preferences but misses their structure entirely.

We wanted a memory system that thinks relationally, hierarchically, and predictively.

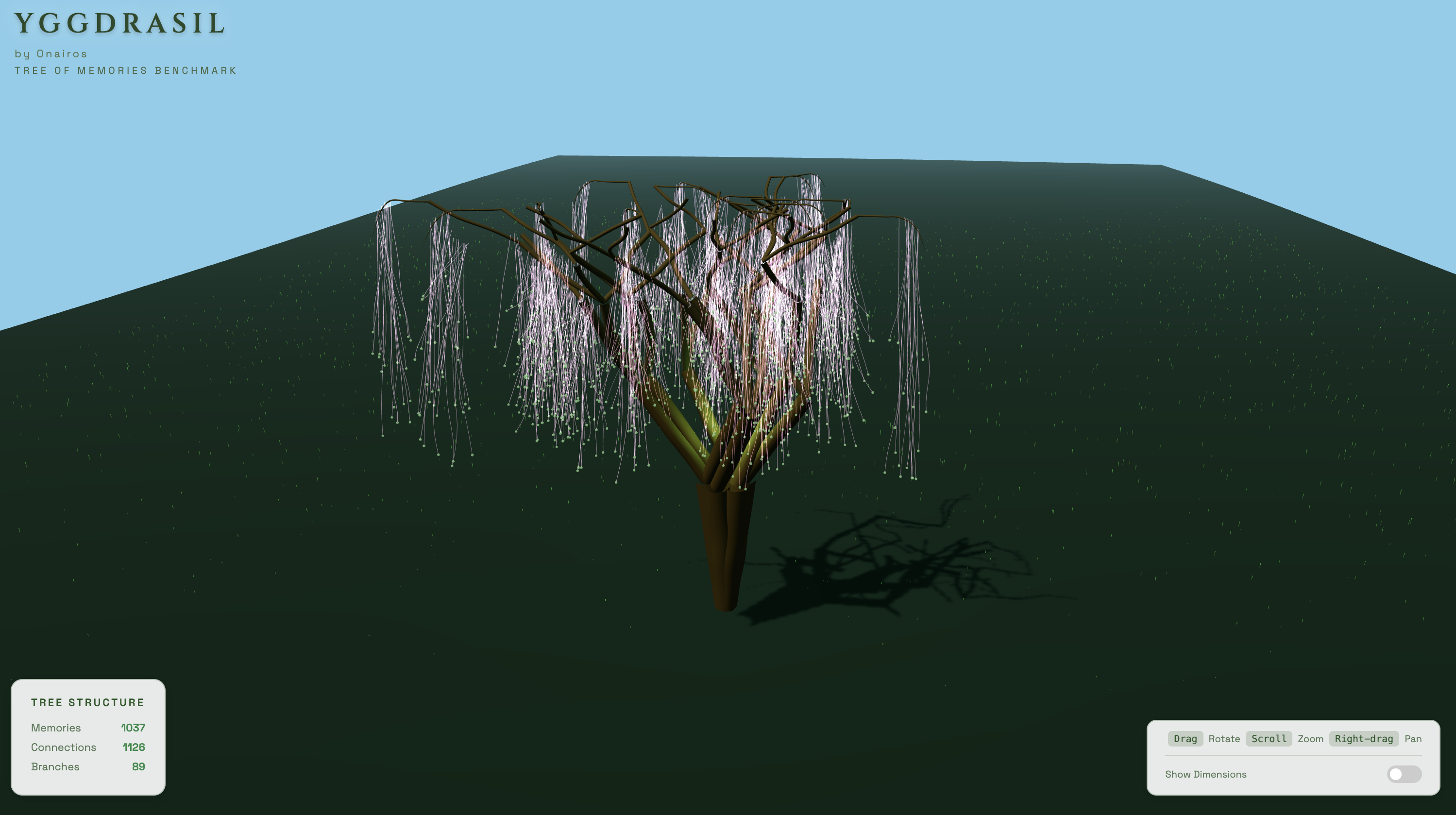

Figure 1: A Yggdrasil tree built from one user's preference data. Branches represent topic clusters, leaves are individual items, and cross-links capture associations across domains.

Meet Yggdrasil: A Benchmark for Associative Memory

Named after the Norse cosmic tree that connects all realms, Yggdrasil is our benchmark pipeline for evaluating how well AI systems understand user preferences. It constructs a hierarchical tree from a user's content history, holds out entire topic branches, and tests whether a system can predict preferences in unseen domains. It measures associative transfer, not memorization.

Figure 2: Animated Yggdrasil tree. The benchmark structure emerges automatically from user engagement data.

The benchmark tree is built per-user through a four-step pipeline:

- Embed: each content item is encoded into a dense semantic vector

- Cluster: dimensionality reduction and hierarchical clustering group items into topic branches

- Link: cross-branch associations connect related items across different topic clusters

- Blot: 25% of branches are held out entirely, and each held-out item becomes a 20-option multiple-choice question with graded distractors
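The sketch below illustrates the Blot step. It assumes the Embed, Cluster, and Link steps have already produced named branches. The branch contents, the `distractor_pool`, and uniform random sampling of distractors are hypothetical simplifications. The benchmark itself uses graded distractors, which this sketch does not model.

```python
import random

def blot(branches, holdout_frac=0.25, seed=0):
    """Hold out whole topic branches (not individual items). Returns (trained, held_out)."""
    rng = random.Random(seed)
    names = sorted(branches)
    n_held = max(1, round(len(names) * holdout_frac))
    held = set(rng.sample(names, n_held))
    trained = {name: branches[name] for name in names if name not in held}
    held_out = {name: branches[name] for name in names if name in held}
    return trained, held_out

def make_question(answer, distractor_pool, n_options=20, seed=0):
    """One multiple-choice question: the held-out item plus n_options - 1 distractors.

    Distractors are sampled uniformly here; the benchmark grades them instead.
    """
    rng = random.Random(seed)
    candidates = [item for item in distractor_pool if item != answer]
    options = rng.sample(candidates, n_options - 1) + [answer]
    rng.shuffle(options)
    return {"options": options, "answer_index": options.index(answer)}

# Hypothetical user with four topic branches; 25% of four branches = one held-out branch.
branches = {
    "Art": ["impressionism", "sculpture"],
    "Science": ["black holes", "CRISPR"],
    "Cuisine": ["ramen", "sourdough"],
    "Music": ["jazz standards", "synthwave"],
}
trained, held_out = blot(branches)
pool = [f"unrelated item {i}" for i in range(30)]  # hypothetical non-engaged items
questions = [make_question(item, pool) for items in held_out.values() for item in items]
print(len(trained), len(held_out), len(questions), len(questions[0]["options"]))  # 3 1 2 20
```

The sketch holds out whole branches rather than random items. No item from a held-out topic is visible during training, which is what makes the questions a test of transfer rather than recall.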

No fixed ontology. No manual categories. The tree structure emerges directly from data, and the benchmark tests whether systems can infer across its gaps.

The 12-Experiment Journey

We ran 12 experiments on the Yggdrasil benchmark to evaluate approaches to preference prediction. The evaluation task: given 75% of a user's content engagement history (the trained branches), predict which of 20 candidate items they would engage with next from a held-out branch. Random baseline accuracy is 5%.
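The scoring rule here is top-1 accuracy over 20-option questions, so a uniform random guesser is expected to score 1/20 = 5%. The following is a minimal sketch. The question format (`options`, `answer_index`) and the `predict` callable are hypothetical names, not the benchmark's actual interface.

```python
import random

def accuracy(predict, questions):
    """Top-1 accuracy: fraction of questions whose predicted index equals the answer index."""
    hits = sum(predict(q["options"]) == q["answer_index"] for q in questions)
    return hits / len(questions)

def random_predictor(seed=0):
    """Baseline that picks one of the options uniformly at random."""
    rng = random.Random(seed)
    return lambda options: rng.randrange(len(options))

N_OPTIONS = 20
print(1 / N_OPTIONS)  # 0.05: expected accuracy of uniform guessing

# Simulate hypothetical questions with the correct answer at a random position.
rng = random.Random(42)
questions = [
    {"options": [f"option {i}" for i in range(N_OPTIONS)], "answer_index": rng.randrange(N_OPTIONS)}
    for _ in range(20_000)
]
print(f"simulated random accuracy: {accuracy(random_predictor(), questions):.3f}")  # close to 0.050
```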

Because Yggdrasil blots entire topic branches rather than random items, a system trained on Art, Science, and Cuisine preferences must predict Music preferences from content categories not observed during training. This tests associative transfer: can the system use cross-domain structure to infer into unseen territory?

Where Approaches Fell Short

Vector Databases + LLM Extraction: 6-10% accuracy

We evaluated Milvus and ChromaDB as retrieval backends, extracting memories into embeddings, constructing hybrid indices, and feeding retrieved results to LLMs. Accuracy remained near random. LLM-based extraction discards the specific details that carry predictive signal. "User likes Neil deGrasse Tyson videos about black holes" is abstracted to "User interested in astrophysics," and the discriminative information is lost.

Raw Memory Storage: 20% accuracy

Storing raw content without extraction, preserving the exact text users engaged with, raised accuracy to 20%, roughly two to three times the extraction-based results. This indicated that each layer of LLM processing between input data and prediction degrades the predictive signal.

LLM Summary Strategies: 4-26% accuracy

We evaluated nine summarization strategies for user preferences, including personality descriptions, hierarchical summaries, weighted tags, and persona models. Each additional layer of abstraction degraded accuracy: flat structured tags (26%) outperformed rich personality narratives (6-18%) by a wide margin.

Adding Features to the Graph Model: Always Hurt

This was the most consistent finding across four experiments:

- Adding hierarchical edges from PCA clusters: accuracy dropped from 33.2% to 27.1%

- Penalizing similarity to disliked items: accuracy dropped at every penalty level

- Adding content-appeal dimensions: accuracy dropped from 28.6% to 21.8%

- Replacing our text encoder with a multimodal one: accuracy dropped from 28.6% to 19.7%

Every auxiliary signal degraded accuracy. The graph model achieves its best performance with clean, sparse semantic similarity edges and no additional features.

Approaches That Delivered

Full Context GPT-4.1 mini: 31.4%

Providing the full liked-item history (~39K tokens) directly in GPT-4.1 mini's context window yielded 31.4% accuracy. No retrieval or summarization was applied. Only raw preference data and the prediction query were provided. This establishes a baseline for frontier LLM performance given complete information.

Full Context Grok 4.1 Fast Reasoning: 45.1%

Grok achieved the highest LLM accuracy at 45.1% using the "similarity" prompt variant, at ~$0.03 per query with a ~500B-parameter MoE model. Prompt engineering shifted accuracy from 16% to 36% on identical data, demonstrating the fragility of LLM-based preference prediction.

Cognitive Preference Graph (Onairos Mind 1): 33.2%

The graph-based approach outperforms GPT-4.1 mini with 347x fewer parameters and zero inference cost.

Mem0 Memory Consolidation: 38.0%

Mem0 consolidates raw engagement items into memories via LLM calls, then uses cosine similarity retrieval to feed an LLM for prediction. It achieved 38.0%, outperforming both GPT-4.1 mini and Mind 1. The trade-off: ~1,161 LLM calls and ~93 minutes of ingestion per user, versus 50 seconds and zero API calls for Mind 1.

Zep Memory Architecture: 40.0%

Zep's temporal knowledge graph (powered by Graphiti) uses entity extraction, fact deduplication, and hybrid retrieval combining cosine similarity, BM25, and graph traversal. It produced 3,020 entity nodes and 1,797 fact edges from 1,287 items, achieving 40.0% accuracy. It edges out Mem0 by 2 percentage points but requires ~174 minutes of total processing and thousands of LLM calls per user.

Zep was evaluated using its open-source Graphiti engine with paper-matching configuration (BGE-m3 embeddings, cross-encoder reranking, sequential ingestion). Results reflect Graphiti's publicly available implementation, not Zep Cloud's managed service.
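Hybrid retrieval needs a way to merge rankings from several retrievers. The Zep configuration above reranks with a cross-encoder. Reciprocal rank fusion (RRF) is a simpler, generic alternative, sketched below with the conventional constant k = 60 purely to illustrate the idea. It is not Graphiti's or Zep's code, and the fact names and the three input rankings are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each item scores sum(1 / (k + rank)) over lists, ranks starting at 1."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] += 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)

cosine_hits = ["fact_a", "fact_b", "fact_c"]   # hypothetical embedding-similarity ranking
bm25_hits = ["fact_b", "fact_d", "fact_a"]     # hypothetical keyword ranking
graph_hits = ["fact_b", "fact_c"]              # hypothetical graph-traversal ranking
print(reciprocal_rank_fusion([cosine_hits, bm25_hits, graph_hits]))
# ['fact_b', 'fact_a', 'fact_c', 'fact_d']
```

RRF uses only ranks, so cosine scores and BM25 scores, which live on incompatible scales, never need to be normalized against each other.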

Yggdrasil Benchmark Results

| System | Accuracy | Parameters | Cost |
|---|---|---|---|
| Random | 5.0% | — | $0 |
| Full-context GPT-4.1 mini | 31.4% | ~8B | ~$0.02 per query |
| Onairos Mind 1 | 33.2% | ~23M (329K trainable) | $0 |
| Mem0 Memory Architecture | 38.0% | ~23M + LLM | ~$0.72 total |
| Zep Memory Architecture | 40.0% | ~23M + LLM | ~$3-5 total |
| Full-context Grok 4.1 Fast | 45.1% | ~500B | ~$0.03 per query |

A 23M-parameter graph model running locally on a laptop outperformed GPT-4.1 mini, which has roughly 347x more parameters and had the full raw data in its context window.

At a glance: 347x fewer parameters, $0 inference cost, 50s train time, 33.2% accuracy.

- 347x more parameter-efficient than GPT-4.1 mini (~8B parameters)

- ~22,000x smaller than Grok 4.1 Fast (~500B parameters)

- Zero cost at inference (runs entirely local)

- 4 minutes for the full benchmark on a laptop

- 50 seconds to train the entire graph
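The headline ratios can be rechecked from the rounded figures quoted in this post: ~23M, ~8B, and ~500B parameters, plus 50 seconds of training versus ~93 and ~174 minutes of processing. Because the inputs are estimates, the outputs are approximate. The time ratios also compare Mind 1's training against the other systems' ingestion, which are not identical workloads.

```python
mind1_params = 23e6          # ~23M total (329K trainable), per the results table
gpt41_mini_params = 8e9      # ~8B (estimate used in this post)
grok_params = 500e9          # ~500B MoE (estimate used in this post)

mind1_train_s = 50           # Mind 1 training time
mem0_ingest_s = 93 * 60      # ~93 minutes of Mem0 ingestion per user
zep_total_s = 174 * 60       # ~174 minutes of Zep total processing

print(f"vs GPT-4.1 mini: {gpt41_mini_params / mind1_params:.1f}x parameters")      # 347.8x
print(f"vs Grok 4.1 Fast: {grok_params / mind1_params:,.0f}x parameters")          # 21,739x
print(f"Mem0 ingestion vs Mind 1 training: {mem0_ingest_s / mind1_train_s:.0f}x")  # 112x
print(f"Zep processing vs Mind 1 training: {zep_total_s / mind1_train_s:.0f}x")    # 209x
```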

Every system that outscores Mind 1 requires orders of magnitude more resources. Mem0 needs over 1,100 LLM calls and over 90 minutes of ingestion per user. Zep builds a full knowledge graph with ~174 minutes of processing and thousands of LLM calls, gaining only 2 percentage points over Mem0. Grok 4.1 Fast achieves the highest accuracy at 45.1%, but it requires a ~500B-parameter model at ~$0.03 per query, a cost structure that is prohibitive for on-device deployment at scale. Mind 1 runs locally on a laptop with no API dependency.

Cross-User Generalization

Figure 3: Yggdrasil benchmark trees across six users. Each tree's topology emerges uniquely from that user's engagement data, producing different branch structures and cross-link patterns.

Single-user benchmarks risk overfitting conclusions to one profile. We evaluated across six users with diverse content profiles, ranging from mixed-platform users consuming X/Twitter, YouTube, and ChatGPT content to single-platform users with concentrated topical overlap. All results use consistent formatting across correct answers and distractors.

The Cognitive Preference Graph (CPG, i.e., Mind 1) outperforms GPT-4.1 mini on diverse, multi-platform content (33.2% vs 31.4% on the primary benchmark) with 347x fewer parameters. GPT-4.1 mini performs better on users with homogeneous or single-platform content. On our largest single-platform dataset (100% X/Twitter), Enriched CPG matched GPT-4.1 mini at 38.0%. Across all six users, CPG averages 27.3% vs GPT-4.1 mini's 34.6%, at zero inference cost versus ~$0.02 per query.

The enrichment pipeline, which classifies items with an LLM before graph construction, consistently lifts baseline CPG from near-random to competitive accuracy, with 2.6x to 11.7x improvements across users. Improved embeddings and fixed taxonomies further narrow the gap on the most difficult user profiles.

The accuracy gap to frontier LLMs remains. However, a 23M-parameter, zero-cost graph model consistently achieves 2.6–7.6x above random baseline across all tested users. The largest remaining gaps correspond to user profiles where the improvement path (better embeddings, richer taxonomies) is most direct.
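Random guessing on a 20-option question scores 5%, so these lift figures convert directly back to accuracies. By the same measure, the six-user CPG average of 27.3% sits at about 5.5x random:

$$
\text{lift} = \frac{\text{accuracy}}{1/20}, \qquad
2.6 \times 5\% = 13\%, \qquad
7.6 \times 5\% = 38\%, \qquad
\frac{27.3\%}{5\%} \approx 5.5
$$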

How Onairos Mind 1 Works

- Encode preferences: every liked item is encoded with a lightweight sentence transformer, producing a dense vector for each piece of content.

- Build a sparse graph: items are connected by semantic similarity and shared categories, forming clusters of related preferences.

- Train a Graph Attention Network: a small GAT learns which neighbors matter most for each node, training in seconds on a laptop.

- Score by gap detection: at test time, each option is scored by how well it fills a structural "gap" in the graph. The correct answer isn't always the most similar item. It's the one that best completes an emerging pattern.

This captures a property that pure similarity matching misses: the structural role an item plays in the preference graph. An item can optimally complete a cluster missing a member without being the most similar to any individual node.
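This post does not give Mind 1's gap-scoring function, so the sketch below uses a deliberately simple, hypothetical proxy. It scores a candidate by its cosine similarity to a cluster's centroid, roughly where a missing member would sit, rather than to its single nearest liked item. The two-dimensional vectors are toy data chosen to show how the two rules can disagree.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def nearest_neighbor_score(candidate, cluster):
    """Similarity-matching baseline: best match to any single liked item."""
    return max(cosine(candidate, item) for item in cluster)

def centroid_gap_score(candidate, cluster):
    """Hypothetical gap proxy: how well the candidate fits the cluster as a whole."""
    centroid = [sum(dim) / len(cluster) for dim in zip(*cluster)]
    return cosine(candidate, centroid)

cluster = [(1.0, 0.0), (0.0, 1.0)]            # two liked items with a "hole" between them
candidates = {"A": (1.0, 0.05), "B": (0.7, 0.7)}

for rule in (nearest_neighbor_score, centroid_gap_score):
    best = max(candidates, key=lambda name: rule(candidates[name], cluster))
    print(rule.__name__, "->", best)
# nearest_neighbor_score -> A
# centroid_gap_score -> B
```

Candidate A is a near-duplicate of one liked item. Candidate B matches neither item closely but sits where the cluster's missing member would be, so only the completion-style rule picks it.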

Why Sparsity Matters

A key empirical finding: increasing graph density consistently degrades performance.

| Config | Edges | Avg Degree | Accuracy |
|---|---|---|---|
| YggCPG Dense | 40,052 | 31.1 | 18.5% |
| YggCPG Default | 27,400 | 21.3 | 27.1% |
| Onairos Mind 1 | 13,942 | 10.8 | 33.2% |

When every node connects to every other node, GAT representations converge toward the mean and structural gaps disappear. Sparsity preserves the discriminative structure that gap-based scoring relies on.

This parallels biological neural connectivity, which is sparse (~0.1% of possible connections). Discriminative capacity arises from which connections exist, not from maximizing their number.
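Both effects can be reproduced in a toy setting. The sketch below builds k-nearest-neighbor graphs of increasing density over random embeddings, then runs two rounds of message passing. A GAT updates node $i$ as $h_i' = \sigma\big(\sum_{j \in \mathcal{N}(i)} \alpha_{ij} W h_j\big)$ with learned attention weights $\alpha_{ij}$. As a simplifying assumption, this sketch fixes $\alpha_{ij}$ to uniform and $W$ to the identity and drops $\sigma$, so each step is a plain neighborhood mean. The random vectors, the values of k, and the spread metric are hypothetical choices for illustration. They are not Mind 1's configuration, which also adds shared-category edges.

```python
import math
import random

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def knn_graph(vectors, k):
    """Undirected k-NN graph: link each item to its k most similar other items."""
    edges = set()
    for u, vec in vectors.items():
        others = sorted((v for v in vectors if v != u),
                        key=lambda v: cosine(vec, vectors[v]), reverse=True)
        edges.update(frozenset((u, v)) for v in others[:k])  # u-v and v-u are one edge
    return edges

def mean_aggregate(vectors, edges):
    """One round of message passing with uniform attention: average over self + neighbors."""
    neighbors = {node: {node} for node in vectors}
    for u, v in (tuple(e) for e in edges):
        neighbors[u].add(v)
        neighbors[v].add(u)
    dims = len(next(iter(vectors.values())))
    return {node: tuple(sum(vectors[n][d] for n in nbrs) / len(nbrs) for d in range(dims))
            for node, nbrs in neighbors.items()}

def spread(vectors):
    """Mean squared distance of node representations from their global mean."""
    n = len(vectors)
    dims = len(next(iter(vectors.values())))
    mean = [sum(v[d] for v in vectors.values()) / n for d in range(dims)]
    return sum(sum((v[d] - mean[d]) ** 2 for d in range(dims)) for v in vectors.values()) / n

# Hypothetical random 8-dimensional embeddings for 50 items.
rng = random.Random(0)
items = {f"item{i}": tuple(rng.gauss(0, 1) for _ in range(8)) for i in range(50)}

for k in (3, 10, 49):                       # k = 49 connects every pair of the 50 items
    edges = knn_graph(items, k)
    h = items
    for _ in range(2):                      # two rounds of aggregation
        h = mean_aggregate(h, edges)
    print(f"k={k:>2}: {len(edges):>4} edges, avg degree {2 * len(edges) / len(items):.1f}, "
          f"spread {spread(h):.4f}")
```

With k = 49, every item links to every other (1,225 edges, average degree 49.0). After a single round, all 50 representations equal the global mean and the spread drops to zero, which is the collapse described above. Sparser graphs keep nodes distinguishable.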

The Dominant Pattern: Less LLM, Better Results

The most consistent finding across all experiments:

| Approach | LLM Involvement | Accuracy |
|---|---|---|
| Onairos Mind 1 (CPG) | None | 33.2% |
| Full-context GPT-4.1 mini | Full | 31.4% |
| Retrieval + LLM | Retrieval + reasoning | 18-26% |
| Mem0 consolidation + LLM | Retrieval + consolidation + reasoning | 38.0% |
| Zep knowledge graph + LLM | Entity extraction + graph retrieval + reasoning | 40.0% |
| Summary only + LLM | Summarization + reasoning | 4-16% |

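Outside the consolidation-based memory systems (Mem0 and Zep), which recover accuracy only at the cost of over a thousand LLM calls per user, each added layer of LLM processing degraded accuracy. Three mechanisms contribute: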

- Information loss: LLM extraction replaces specific terms with abstractions, discarding the exact signal that carries predictive value

- Abstraction bias: LLMs override direct matching signals with abstract reasoning about "what kind of person would like this"

- Prompt fragility: Same data, same model, but changing the prompt shifts Grok's accuracy from 16% to 36%

LLMs attempt to reason about preferences where direct structural matching is more effective. The graph model learns preference structure without intermediate abstraction, and this proves sufficient.

What This Means for AI Memory Systems

- Structure over scale. A 23M-parameter system outperforms an 8B-parameter LLM on the primary benchmark. Architecture selection matters more than parameter count.

- Minimize abstraction. Summarization and extraction consistently degrade preference signals. Raw data fidelity outperforms LLM-processed representations at every level tested.

- Sparsity preserves signal. Across four experiments, increased edge density consistently reduced accuracy. Dense graphs collapse representations toward the mean, destroying the structural gaps that enable discrimination.

- Gap detection outperforms similarity. Scoring by structural completion ("what is missing?") outperforms nearest-neighbor similarity. Correct answers frequently complete structural patterns rather than maximizing pairwise similarity.

- Rigorous evaluation is essential. Auxiliary signals that appeared beneficial in isolation consistently degraded accuracy under controlled conditions. Cross-user evaluation on six real profiles is necessary to validate generalization claims.

What We're Building for You

After a dozen experiments, Onairos Mind 1 already:

- Outperforms GPT-4.1 mini at preference prediction on the primary benchmark

- Uses 347x fewer parameters

- Costs nothing at inference and runs entirely on your device

- Trains in 50 seconds, benchmarks in 4 minutes on a laptop

- Gives you a fully interpretable model of your own preferences

Grok 4.1 Fast scores higher at 45.1%, but requires a ~500B-parameter model and ~$0.03 per query. GPT-4.1 mini averages 34.6% vs CPG's 27.3% across six users. We're honest about the gap. But the trajectory is clear: structured, sparse, associative architectures can compete with models orders of magnitude larger, at zero cost to you.

Here's what comes next, and what it means for you as a user:

- Your tree grows with you. Real-time adaptation means your preference graph updates as new data arrives. Every new like, save, or interaction refines how well the system understands you.

- Everything you create becomes a memory node. Images, voice notes, and videos will all be treated as first-class data in your preference graph, not just text.

- Scaling to 100+ real users to prove cross-user generalization and close the remaining accuracy gap on single-platform content.

- Your model stays on your device. Zero API dependency means no one else can see, sell, or revoke access to how you think.

Every user gets their own tree, their own topology. A personal AI that understands your preferences because it learned their structure (hierarchical, associative, sparse) directly from your data.

Coming in Part 2: expanded benchmark results across a larger user base, testing in a production environment, and improved accuracy as the system matures. Stay tuned.

Key Takeaways

- Structure > Parameters: 23M beats 8B when the architecture matches the task

- Less LLM = Better Accuracy: extraction and reasoning degrade preference prediction

- Test Associations, Not Memorization: topic-based holdout reveals true capability

- Sparse Graphs Win: adding edges consistently hurts; sparsity preserves discrimination

- Gap Detection > Similarity: asking "what's missing?" beats "what's similar?"

- Zero Cost Inference: local models with no API dependency

- Evaluate Honestly: cross-user testing reveals where the system excels and where it falls short

References

[1] LOCOMO Benchmark (Snap Research, 2024): snap-research.github.io/locomo

[2] LongMemEval (2024): github.com/xiaowu0162/LongMemEval

[3] Mem0 Research & Memobase Evaluations (2025): mem0.ai/research ; memobase.io/blog/ai-memory-benchmark

Author

Zion Darko

Founder & CEO

Inventor and Dreamer and CEO.

Contributors

Satoru Gojo

Sorcerer

Magi. Self-taught. Combining magiks with machines.